AI content generation flips the usual SEO workflow. Instead of planning a few pages at a time, you can spin up hundreds in days. Without clear internal linking strategies, that growth turns into a messy internal link graph that wastes crawl budget and weakens topical authority. The solution is to design link rules and silo architecture first, then let AI work inside that structure.

Why AI-Generated Sites Break Traditional Internal Linking Models

Handcrafted sites grew slowly. You added a page, linked it from a menu or a relevant article, and the structure stayed understandable. AI content generation workflows change that. A single campaign can create hundreds of URLs at once, often with inconsistent paths and ad hoc links that do not reflect a deliberate silo architecture.

Search engines read those links as signals about topics and importance. On AI-heavy sites, random cross-linking and deeply nested folders blur the topical clusters crawlers infer and spread PageRank thinly across unrelated pages. The result is wasted crawl budget, shallow coverage of core topics and anchor text that does little to reinforce your main themes.

Unmanaged AI content growth also creates orphan pages and excessive crawl depth. Important guides may sit four or five clicks from the homepage. That slows discovery and dilutes equity. Over time, the internal link graph becomes so noisy that even strong content struggles to earn stable visibility.

Designing An AI-Assisted Silo Structure Before You Generate Content

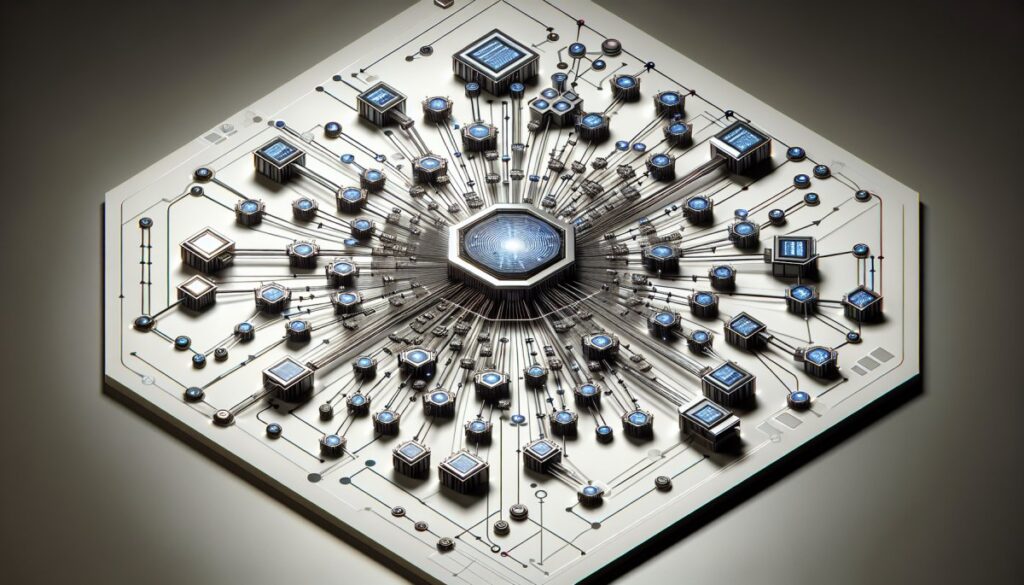

Start with a human-designed topic map. Group related themes into hubs, spokes and supporting resources. Each hub targets a core topic, spokes cover its subtopics, and support assets handle narrow how-to queries. This hub-and-spoke model gives you a clear URL taxonomy and a blueprint for every future internal link.

Use AI tools to propose variations and gaps, but do not let them dictate architecture. You decide the folder structure, canonical URLs and how many levels each silo needs. AI then fills content within that framework. This keeps AI content generation workflows aligned with a stable internal link strategy.

Set guardrails for URL patterns before you publish. Define consistent folders for each silo, rules for slugs and how you handle versions or series. That consistency helps search engines understand crawl depth and topical clusters, and it makes later link auditing far easier.
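To make those guardrails checkable before publishing, here is a minimal Python sketch that validates a URL path against one possible convention. The silo names in `APPROVED_SILOS`, the three-level limit and the lowercase-hyphen slug rule are assumptions for illustration; substitute the rules from your own topic map.

```python
import re

# Hypothetical silo folders; replace with the folders from your own topic map.
APPROVED_SILOS = {"email-marketing", "technical-seo", "content-strategy"}
MAX_LEVELS = 3  # assumed: hub (1), spoke (2), support asset (3)

# Assumed slug rule: lowercase letters and digits separated by single hyphens.
SLUG_RE = re.compile(r"^[a-z0-9]+(?:-[a-z0-9]+)*$")


def check_url(path: str) -> list:
    """Return a list of rule violations for a URL path; an empty list means it passes."""
    problems = []
    if not (path.startswith("/") and path.endswith("/")):
        problems.append("path must start and end with '/'")
    segments = [s for s in path.split("/") if s]
    if not segments:
        return problems + ["path has no silo folder"]
    if segments[0] not in APPROVED_SILOS:
        problems.append(f"unknown silo folder: {segments[0]!r}")
    if len(segments) > MAX_LEVELS:
        problems.append(f"too deep: {len(segments)} levels (max {MAX_LEVELS})")
    for seg in segments:
        if not SLUG_RE.match(seg):
            problems.append(f"bad slug: {seg!r}")
    return problems


print(check_url("/technical-seo/crawl-budget/"))  # []
print(check_url("/Blog/AI_Post-1"))  # four violations
```

Running a check like this over every URL in an AI publishing queue catches stray folders and inconsistent slugs before they reach the live link graph.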

Operationalizing Hub And Spoke Internal Linking For AI Content At Scale

Create a simple link policy. Every spoke links up to its hub, across to two or three closely related spokes, and down to any deeper support assets. Hubs link down to all spokes and key support pieces. Support pages always link back up to their parent spoke and hub. This keeps the internal link graph tight and predictable.
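The policy above is simple enough to express as code. The following Python sketch is one way to do it, assuming each page record carries a role, a parent URL and an editor-chosen list of related spokes; the `Page` structure, function names and example URLs are hypothetical. It computes the links a page must contain and reports which ones are missing.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class Page:
    url: str
    role: str                      # "hub", "spoke" or "support"
    parent: Optional[str] = None   # hub URL for a spoke, spoke URL for a support page
    related: List[str] = field(default_factory=list)  # sibling spokes picked by an editor


def required_links(page: Page, site: Dict[str, Page]) -> Set[str]:
    """Internal links a page must contain under the hub-and-spoke policy."""
    children = {p.url for p in site.values() if p.parent == page.url}
    if page.role == "hub":
        return children                                          # down to every spoke
    if page.role == "spoke":
        return {page.parent} | set(page.related[:3]) | children  # up, across (max 3), down
    if page.role == "support":
        spoke = site[page.parent]
        return {spoke.url, spoke.parent}                         # back up to spoke and hub
    raise ValueError(f"unknown role: {page.role}")


def missing_links(page: Page, actual: Set[str], site: Dict[str, Page]) -> Set[str]:
    """Required links that the page's current content does not contain."""
    return required_links(page, site) - actual


# Hypothetical example silo.
SITE = {
    "/seo/": Page("/seo/", "hub"),
    "/seo/crawl-budget/": Page("/seo/crawl-budget/", "spoke", "/seo/", ["/seo/log-files/"]),
    "/seo/log-files/": Page("/seo/log-files/", "spoke", "/seo/", ["/seo/crawl-budget/"]),
    "/seo/crawl-budget/checklist/": Page(
        "/seo/crawl-budget/checklist/", "support", "/seo/crawl-budget/"
    ),
}
```

This sketch only enforces the mechanical rules. Which support pieces count as "key" enough for a hub link remains an editorial decision you would add by hand.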

Encode those rules directly into your prompts. For example, ask the model to suggest three internal links to related spokes and one link back to the main hub, each with descriptive anchor text. This builds your linking rules into every draft instead of bolting links on afterwards.
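One way to bake the rules into prompts is to generate the linking instructions from your topic map rather than typing them by hand. The Python sketch below only builds prompt text and does not call any model API. The function name, wording and example URLs are assumptions; the key idea is that the model may choose only from an approved list of destinations.

```python
from typing import List, Tuple


def build_link_instructions(
    hub: Tuple[str, str],
    candidates: List[Tuple[str, str]],
    n_related: int = 3,
) -> str:
    """Build the internal-linking part of a drafting prompt.

    hub and candidates are (url, title) pairs taken from your own topic map,
    so the model can only choose from approved destinations.
    """
    hub_url, hub_title = hub
    allowed = "\n".join(f"- {title}: {url}" for url, title in candidates)
    return (
        f"Include exactly one link back to the hub page '{hub_title}' ({hub_url}).\n"
        f"Include {n_related} links to related pages, chosen ONLY from this list:\n"
        f"{allowed}\n"
        "Use descriptive anchor text that reads naturally in the sentence, "
        "vary the wording, and never link to a URL that is not listed.\n"
        "After the draft, list every link as 'anchor text -> URL' for editorial review."
    )


prompt = build_link_instructions(
    ("/seo/", "Technical SEO guide"),
    [
        ("/seo/crawl-budget/", "Crawl budget"),
        ("/seo/log-files/", "Log file analysis"),
        ("/seo/internal-links/", "Internal linking"),
    ],
)
print(prompt)
```

Asking for a separate "anchor text -> URL" list makes the human review step quick, because an editor can check the suggestions without rereading the whole draft.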

Use automated crawlers to check for orphan pages, dead ends and broken links. Tools that visualise the internal link graph make it easy to spot silos that are under-linked or over-connected. Regular audits keep your hub-and-spoke linking healthy as new AI content goes live.
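If your crawler can export the link graph, a few lines of Python can flag these problems. The sketch below assumes a dictionary mapping every known URL to its internal link targets. The keys should come from the sitemap or CMS and not only from the crawl, because a crawl alone never discovers orphans. Here "broken" only means the target is not a known page; a real audit would also check HTTP status codes. The example URLs are hypothetical.

```python
from typing import Dict, List, Set


def audit_graph(links: Dict[str, Set[str]], home: str) -> Dict[str, List[str]]:
    """Flag orphan pages, dead ends and links to unknown URLs."""
    inbound = {url: 0 for url in links}
    broken = []
    for src, targets in links.items():
        for dst in targets - {src}:              # ignore self-links
            if dst in inbound:
                inbound[dst] += 1
            else:
                broken.append(f"{src} -> {dst}")  # target is not a known live page
    return {
        "orphans": sorted(u for u, n in inbound.items() if n == 0 and u != home),
        "dead_ends": sorted(u for u, t in links.items() if not (t - {u})),
        "broken": sorted(broken),
    }


# Hypothetical export: sitemap URLs mapped to the internal links found on each page.
crawl = {
    "/": {"/seo/"},
    "/seo/": {"/seo/crawl-budget/"},
    "/seo/crawl-budget/": {"/seo/", "/seo/old-post/"},
    "/seo/ai-draft-17/": set(),
}
print(audit_graph(crawl, home="/"))
```

In this example, `/seo/ai-draft-17/` is both an orphan and a dead end, which is typical of AI pages that were published without going through the link policy.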

Improving Crawlability And Topical Authority With Structured Link Signals

Structured internal links help search engines prioritise crawl paths. New or updated AI content should receive links from hubs and high authority spokes so crawlers find it quickly. Keep crawl depth shallow for important guides by linking them from navigation, hubs and relevant high traffic articles.
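Crawl depth is simply the shortest click path from the homepage, which a breadth-first search computes directly. The Python sketch below assumes the same URL-to-links dictionary a crawler export would give you. The three-click threshold and the example URLs are illustrative assumptions. The example also shows how one link from the hub pulls a buried guide closer to the surface.

```python
from collections import deque
from typing import Dict, List, Set


def click_depths(links: Dict[str, Set[str]], home: str) -> Dict[str, int]:
    """Breadth-first search from the homepage: fewest clicks needed to reach each page."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        url = queue.popleft()
        for nxt in links.get(url, ()):
            if nxt not in depth:              # first visit in BFS = shortest path
                depth[nxt] = depth[url] + 1
                queue.append(nxt)
    return depth


def too_deep(links: Dict[str, Set[str]], home: str, max_depth: int = 3) -> List[str]:
    """Pages deeper than max_depth clicks, plus pages unreachable from home."""
    depth = click_depths(links, home)
    return sorted(u for u in links if depth.get(u, max_depth + 1) > max_depth)


# Hypothetical site where an important guide is buried in an archive.
site = {
    "/": {"/seo/"},
    "/seo/": {"/seo/archive/"},
    "/seo/archive/": {"/seo/archive/2023/"},
    "/seo/archive/2023/": {"/seo/archive/2023/crawl-budget-guide/"},
    "/seo/archive/2023/crawl-budget-guide/": {"/seo/"},
}
print(too_deep(site, "/"))  # ['/seo/archive/2023/crawl-budget-guide/']
site["/seo/"].add("/seo/archive/2023/crawl-budget-guide/")  # link the guide from its hub
print(too_deep(site, "/"))  # []
```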

Anchor text should be descriptive but natural. Avoid repeating the exact same phrase across hundreds of links. Vary wording while keeping the core concept clear so anchor text distribution looks organic yet informative. This helps search engines understand relationships between pages and strengthens topical authority.
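To check anchor text distribution at scale, you can measure how dominant the most common anchor is for each target page. The Python sketch below assumes a crawl export of (anchor text, target URL) pairs. The 50% share and five-link minimum are arbitrary illustrative thresholds, not rules any search engine publishes.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def anchor_report(
    links: Iterable[Tuple[str, str]], max_share: float = 0.5, min_links: int = 5
) -> Dict[str, Tuple[str, float]]:
    """Flag targets whose single most common anchor exceeds max_share of inbound links.

    links: (anchor_text, target_url) pairs from a crawl export.
    max_share and min_links are illustrative thresholds, not search-engine rules.
    """
    by_target = defaultdict(Counter)
    for anchor, target in links:
        by_target[target][anchor.strip().lower()] += 1   # normalise case and whitespace
    flagged = {}
    for target, counts in by_target.items():
        total = sum(counts.values())
        top_anchor, top_count = counts.most_common(1)[0]
        if total >= min_links and top_count / total > max_share:
            flagged[target] = (top_anchor, round(top_count / total, 2))
    return flagged


# Hypothetical export: one target dominated by a single phrase, one with varied anchors.
links = (
    [("Crawl budget guide", "/seo/crawl-budget/")] * 6
    + [("how crawl budget works", "/seo/crawl-budget/"),
       ("managing crawl budget on large sites", "/seo/crawl-budget/")]
    + [(f"log analysis tip {i}", "/seo/log-files/") for i in range(5)]
)
print(anchor_report(links))  # {'/seo/crawl-budget/': ('crawl budget guide', 0.75)}
```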

Measure impact with log file analysis, analytics and coverage reports. Logs show how bots move through your site and where crawl budget stalls. Analytics reveal which internal paths drive engagement and conversions. Coverage and index reports highlight silos that need stronger internal links or consolidation.
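As a starting point for log file analysis, the Python sketch below counts Googlebot requests per top-level folder, which gives a rough view of how crawl activity is spread across silos. It assumes the Apache/Nginx "combined" log format, and the sample lines are made up. It trusts the user-agent string, which can be spoofed, so a production version should also verify crawler IPs, for example with the reverse DNS check Google documents.

```python
import re
from collections import Counter
from typing import Iterable

# Assumes the Apache/Nginx "combined" log format; adjust the pattern to your server's format.
LOG_RE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_silo(lines: Iterable[str]) -> Counter:
    """Count requests whose user agent claims to be Googlebot, grouped by top-level folder."""
    hits = Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m or "Googlebot" not in m.group("agent"):
            continue  # unparseable line or non-Googlebot request
        path = m.group("path").split("?", 1)[0]  # drop query strings
        segments = [s for s in path.split("/") if s]
        silo = "/" + segments[0] + "/" if segments else "/"
        hits[silo] += 1
    return hits


UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /seo/crawl-budget/ HTTP/1.1" 200 5123 "-" "{UA}"',
    f'66.249.66.1 - - [10/Oct/2024:13:56:02 +0000] "GET /seo/log-files/?ref=nav HTTP/1.1" 200 4410 "-" "{UA}"',
    f'66.249.66.1 - - [10/Oct/2024:13:57:10 +0000] "GET /email/ HTTP/1.1" 301 0 "-" "{UA}"',
    '203.0.113.9 - - [10/Oct/2024:13:58:00 +0000] "GET /seo/ HTTP/1.1" 200 900 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
print(googlebot_hits_by_silo(sample))
```

Comparing these counts with the number of pages in each silo shows where crawl budget concentrates and which silos bots rarely reach.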

Connecting AI-Driven Internal Linking To Your Broader SEO Strategy

Internal links should support revenue, not just traffic. Map each silo to specific lead funnels or product lines. Make sure hubs and key commercial pages receive consistent internal links from AI-generated content, so that both PageRank flow and user journeys point towards business outcomes.

Set governance workflows for ongoing maintenance. On a schedule, review AI content performance, identify thin or overlapping pages and decide whether to merge, redirect or deindex. Consolidating ten weak posts into two strong guides often simplifies the internal link graph and improves equity flow.

When pruning or redirecting, update internal links so they point directly to the best canonical destination rather than through redirects. This keeps crawl depth under control and prevents redirect chains and loops. Over time, a disciplined governance cycle keeps your AI-assisted silo structure clean, efficient and easier to scale.
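After a consolidation round, you can rewrite internal links from your redirect map automatically. The Python sketch below assumes a dictionary of old URL to new URL and a URL-to-links graph; both the structures and the example URLs are hypothetical. It follows each chain to its final destination, raises an error on loops, and drops source pages that have themselves been redirected away.

```python
from typing import Dict, Set


def resolve(url: str, redirects: Dict[str, str]) -> str:
    """Follow a redirect map to its final destination, failing loudly on loops."""
    seen = [url]
    while url in redirects:
        url = redirects[url]
        if url in seen:
            raise ValueError("redirect loop: " + " -> ".join(seen + [url]))
        seen.append(url)
    return url


def rewrite_links(links: Dict[str, Set[str]], redirects: Dict[str, str]) -> Dict[str, Set[str]]:
    """Point every internal link straight at its final URL and drop redirected source pages."""
    return {
        src: {resolve(dst, redirects) for dst in targets}
        for src, targets in links.items()
        if src not in redirects
    }


# Hypothetical pruning: an old AI draft redirected to an intermediate URL,
# which was later merged into the crawl budget guide (a two-hop chain).
redirects = {
    "/seo/ai-draft-1/": "/seo/ai-drafts/",
    "/seo/ai-drafts/": "/seo/crawl-budget/",
}
links = {
    "/seo/": {"/seo/ai-draft-1/", "/seo/log-files/"},
    "/seo/ai-draft-1/": {"/seo/"},
}
print(rewrite_links(links, redirects))
```

Failing on loops, rather than silently skipping them, forces someone to fix the redirect map before the updated links go live.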

Thoughtful internal linking turns AI content from a liability into a durable asset. By defining silo architecture, link rules and prompt patterns before you generate at scale, you protect crawl budget, clarify topical clusters and support revenue pages. If you want to design AI-first internal linking that search engines trust, explore AI-driven SEO frameworks with InjenAI or book a strategy session with our team.

FAQs

How do I keep internal links under control when AI generates hundreds of pages?

Start with a fixed topic map and silo structure, then enforce simple link rules. Every new page must belong to a hub, link up to that hub and across to a few related spokes, and never sit in a random folder of its own. Use crawlers to spot orphan pages, and run regular clean-ups to remove or redirect low-value URLs.

Can AI tools reliably suggest internal links without creating spammy patterns?

Yes, if you give them clear constraints. Provide a list of approved hubs and spokes, define how many links to suggest and require varied, natural anchor text. Always review suggestions before publishing and avoid using identical phrases across many pages. Human oversight plus consistent rules prevents spammy or repetitive patterns.

What is the best way to audit internal links on a large AI-driven site?

Combine a full site crawl with log file analysis and Google Search Console data. The crawl reveals orphan pages, excessive crawl depth and broken links. Logs show how bots actually move through the site. Search Console highlights coverage gaps and underperforming clusters. Together they show where to add, remove or update internal links.